Allen Institute releases Olmo 3, a fully open LLM family with transparent reasoning and lifecycle access.

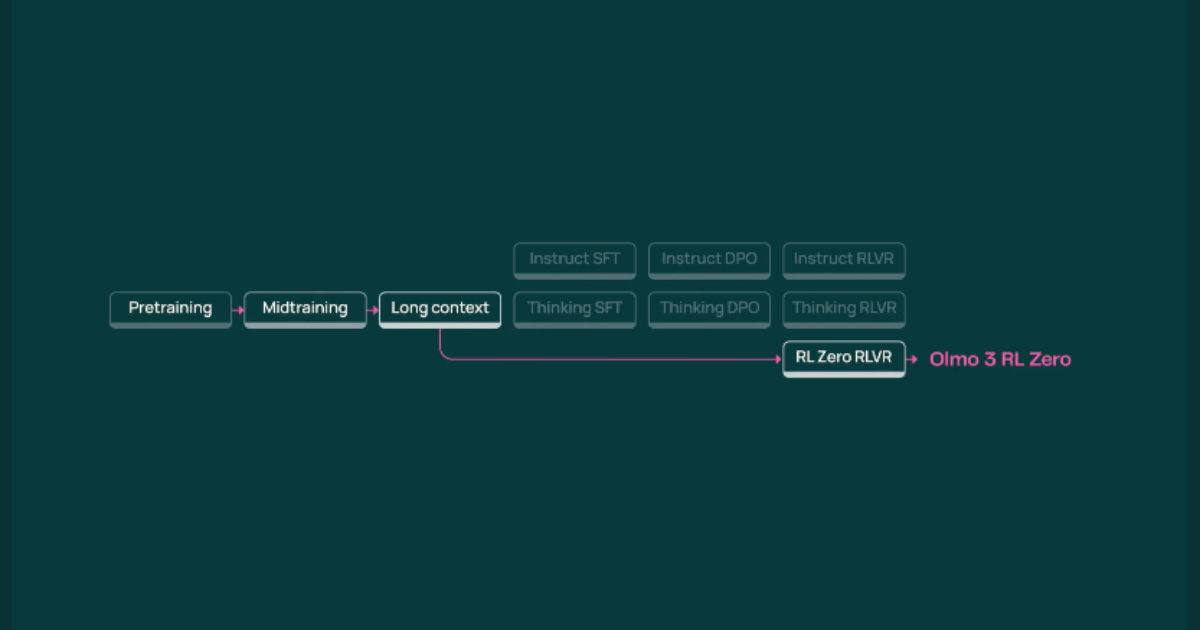

The Allen Institute for AI has launched Olmo 3, a new family of open-source language models that exposes the entire model lifecycle rather than only the final weights. Unlike most open-weight models, Olmo 3 lets developers inspect intermediate reasoning steps, trace outputs back to training data, and experiment with post-training techniques such as supervised fine-tuning and reinforcement learning with verifiable rewards. The flagship, Olmo 3-Think (32B), is built for multi-step reasoning and matches or outperforms leading open models on math and reasoning benchmarks. The smaller 7B variants are optimized for coding, math, and instruction following, which makes them practical to run on modest hardware.

A key feature is the integration of OlmoTrace, a tool that traces model outputs back to their training data origins in real time. This lets researchers and developers understand, modify, and improve model behavior, and it supports community-driven experimentation. The release also includes Dolma 3, a large, high-quality pretraining corpus with strong decontamination and a focus on coding and math data. By making all models, datasets, and training artifacts openly available, Olmo 3 sets a new standard for open-source LLM development and research.
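
To make the idea of tracing an output back to training data concrete, here is a toy sketch. It is not OlmoTrace's implementation: it assumes tracing can be reduced to exact matching of fixed-length word windows against a tiny in-memory corpus, and the names `build_ngram_index` and `trace_output` are hypothetical.

```python
from collections import defaultdict


def build_ngram_index(corpus, n=4):
    """Map every n-word window to the set of document ids that contain it."""
    index = defaultdict(set)
    for doc_id, text in corpus.items():
        words = text.lower().split()
        for i in range(len(words) - n + 1):
            index[tuple(words[i:i + n])].add(doc_id)
    return index


def trace_output(output, index, n=4):
    """Return (span, doc ids) for each n-word window of `output` found verbatim in the corpus."""
    words = output.lower().split()
    matches = []
    for i in range(len(words) - n + 1):
        gram = tuple(words[i:i + n])
        if gram in index:  # membership test does not create new entries
            matches.append((" ".join(gram), sorted(index[gram])))
    return matches


# Hypothetical two-document "training corpus" for illustration only.
corpus = {
    "doc-a": "the quick brown fox jumps over the lazy dog",
    "doc-b": "a binary search halves the interval at every step",
}
index = build_ngram_index(corpus, n=4)
hits = trace_output("We saw the quick brown fox yesterday", index, n=4)
print(hits)
```

A production system would need an index that scales to a full pretraining corpus and would likely match variable-length spans; the sketch only shows the lookup direction, from generated text back to source documents.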

Source: InfoQ