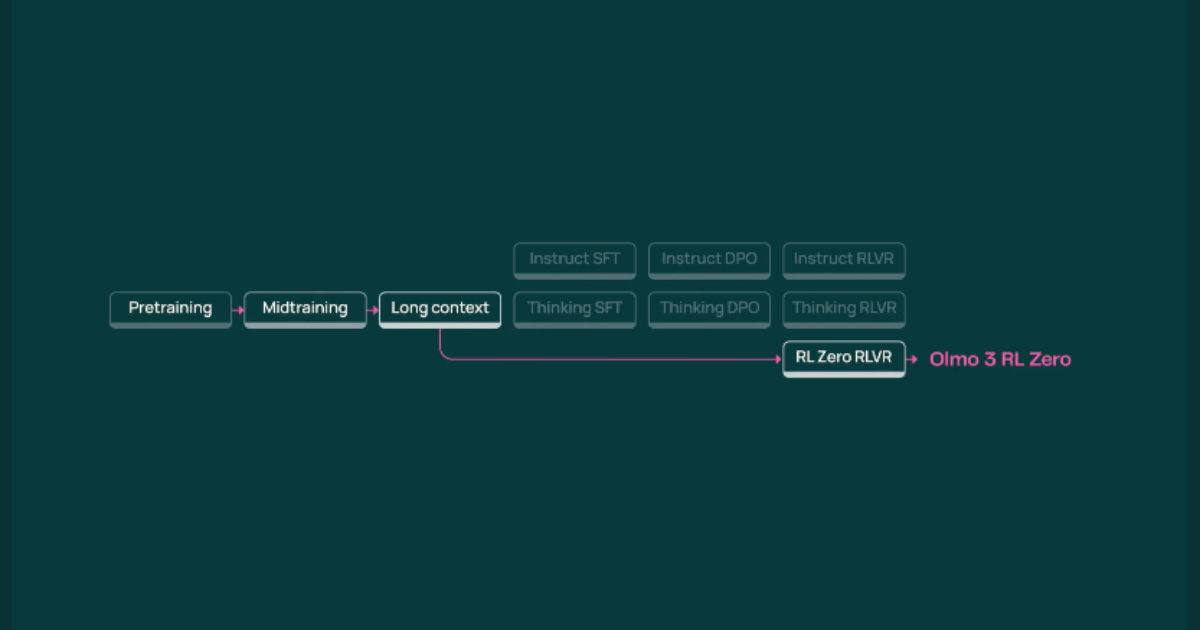

Olmo 3 debuts with full transparency across the model lifecycle and versatile post-training for reasoning and instruction following.

The Allen Institute for AI has launched Olmo 3, a family of open-source large language models that gives developers transparency across the entire model lifecycle. Most language models are released as opaque snapshots. Olmo 3, by contrast, lets developers inspect its reasoning in detail, modify the training datasets, and experiment with post-training techniques such as supervised fine-tuning and reinforcement learning with verifiable rewards.
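To make "verifiable rewards" concrete: in reinforcement learning with verifiable rewards, the reward comes from a programmatic check of the model's output (for example, comparing a math answer against a known solution) rather than from a learned reward model. The sketch below illustrates that idea under simple assumptions. The function names and the "last number in the completion" extraction rule are hypothetical and are not taken from Olmo 3's training code.

```python
import re
from typing import Optional


def extract_final_answer(completion: str) -> Optional[str]:
    """Return the last number in the completion, or None if there is none.

    Hypothetical extraction rule chosen for illustration only.
    """
    matches = re.findall(r"-?\d+(?:\.\d+)?", completion)
    return matches[-1] if matches else None


def verifiable_reward(completion: str, reference: str) -> float:
    """Binary reward: 1.0 if the extracted answer equals the reference, else 0.0."""
    answer = extract_final_answer(completion)
    if answer is None:
        # No parseable answer means the check fails.
        return 0.0
    return 1.0 if float(answer) == float(reference) else 0.0


if __name__ == "__main__":
    print(verifiable_reward("12 * 3 = 36, so the answer is 36", "36"))  # 1.0
    print(verifiable_reward("I think it is 35", "36"))  # 0.0
```

Because the check is deterministic, the reward cannot be gamed the way a learned reward model sometimes can, though real pipelines typically use more robust answer parsing than this single regex.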

The flagship model, Olmo 3-Think (32B), is specialized for multi-step reasoning and allows its outputs to be traced back to the training data, which supports both trust and customization. Smaller 7B variants target instruction following, chat, and reinforcement learning research, pairing strong performance with the ability to run on modest hardware. Because the models, datasets, and other artifacts are released under permissive licenses, Olmo 3 supports open collaboration in AI research, education, and applied projects, and marks a significant step forward for open-weight language model development.

Source: InfoQ